Metadata

- Author: JP Caparas

- Full Title: Dax Raad Just Dropped the Most Honest Take on AI Productivity

- URL: https://medium.com/reading-sh/dax-raad-just-dropped-the-most-honest-take-on-ai-productivity-fd8c552b4dd7

Highlights

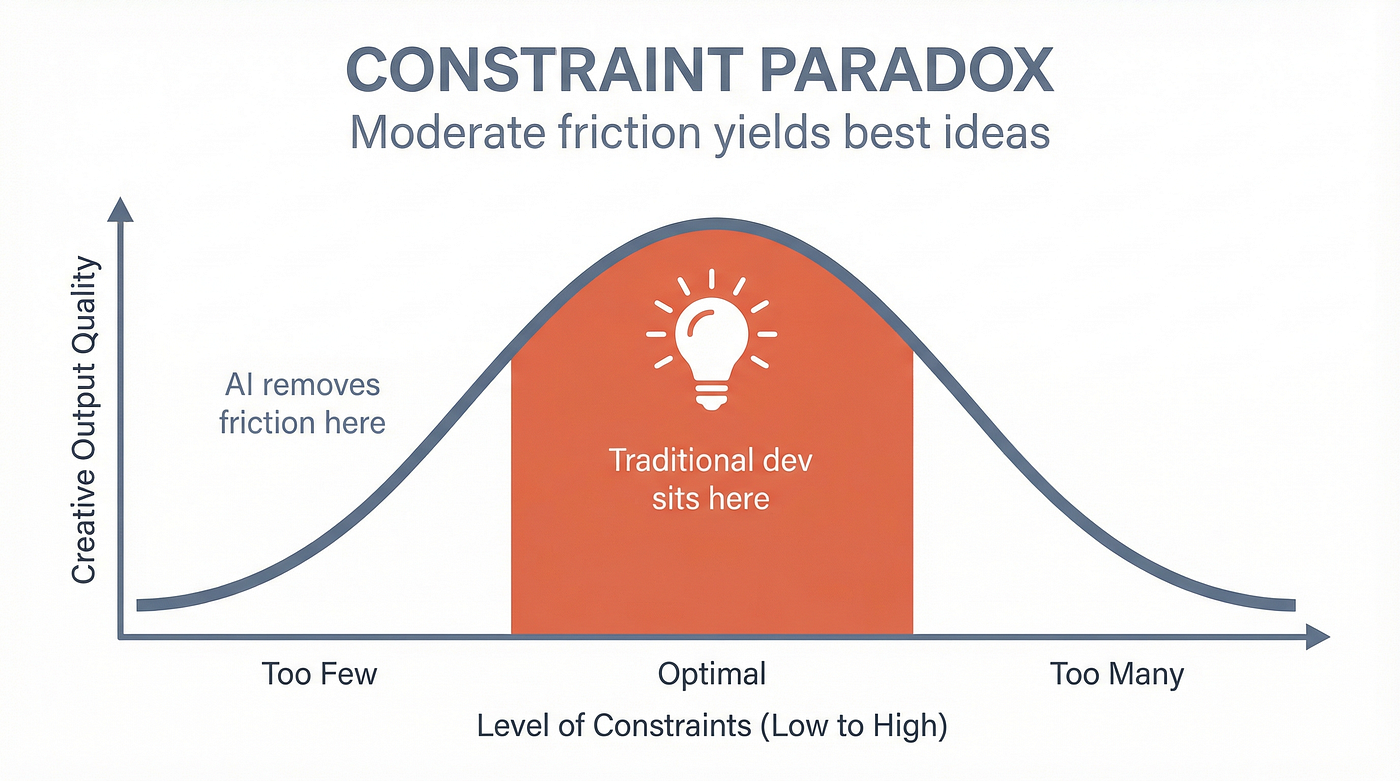

- In 2019, researchers Acar, Tarakci, and van Knippenberg published a meta-review of 145 empirical studies on the relationship between constraints and creativity in the Journal of Management. Their finding? The relationship follows an inverted U-curve. Moderate constraints produce the best creative output. Too few constraints and too many constraints both kill it. (View Highlight)

- “They’re not using AI to be 10x more effective they’re using it to churn out their tasks with less energy spend.” (View Highlight)

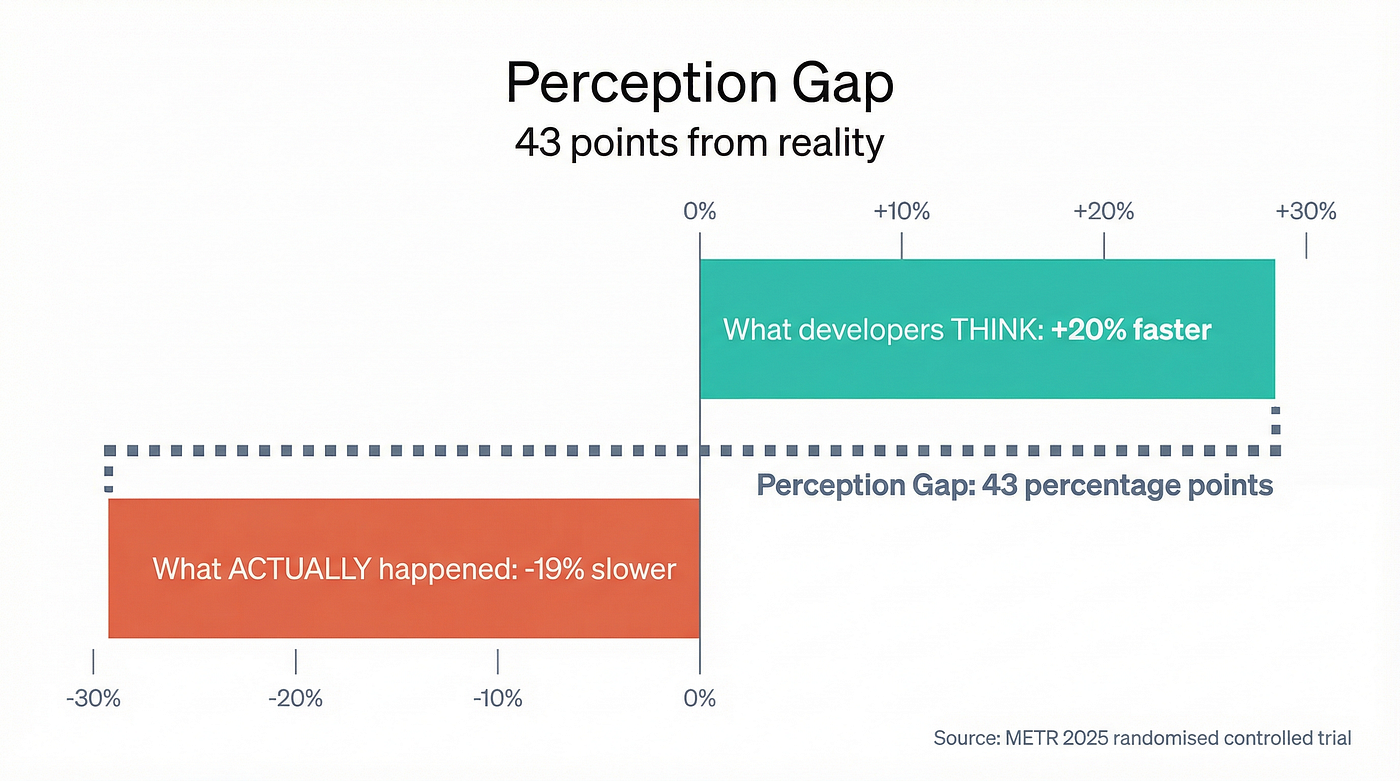

- But here’s the part that should terrify anyone managing a software team: before the study, developers estimated AI would make them 24% faster. After experiencing the slowdown firsthand, they still believed they were 20% faster. The perception gap was 43 percentage points. (View Highlight)

- Developer Mike Judge (not the Silicon Valley creator) at the consultancy Substantial independently replicated this. He ran a 6-week self-experiment: estimated task duration, flipped a coin to decide whether to use AI, timed the results. (View Highlight)
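
The protocol in this highlight (estimate first, flip a coin, then time the task) could be sketched roughly as below. How Judge actually analysed his timings is not stated in these notes, so comparing median actual-to-estimate ratios is an assumption, and every name and number in the sketch is hypothetical and made up purely for illustration.

```python
import random
import statistics
from dataclasses import dataclass


@dataclass
class Trial:
    estimate_min: float  # estimate written down BEFORE the coin flip
    actual_min: float    # measured time once the task is done
    used_ai: bool        # outcome of the coin flip


def assign_ai(rng: random.Random) -> bool:
    """Fair coin flip: True means the task is done with AI assistance."""
    return rng.random() < 0.5


def median_ratio(trials: list[Trial], used_ai: bool) -> float:
    """Median of actual/estimate for one arm of the experiment."""
    ratios = [t.actual_min / t.estimate_min for t in trials if t.used_ai == used_ai]
    return statistics.median(ratios)


def relative_slowdown(trials: list[Trial]) -> float:
    """Positive result: the AI arm overran its estimates more than the control arm."""
    return median_ratio(trials, True) / median_ratio(trials, False) - 1.0


# Hypothetical log of six tasks; these are NOT Judge's data.
TRIALS = [
    Trial(60, 72, True), Trial(30, 33, True), Trial(45, 63, True),
    Trial(60, 60, False), Trial(30, 27, False), Trial(45, 54, False),
]

print(f"next task uses AI: {assign_ai(random.Random())}")
print(f"relative slowdown with AI: {relative_slowdown(TRIALS):.0%}")
```

Estimating before the flip matters: it keeps the estimate from being coloured by knowing which arm the task lands in.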

- “The 2 people on your team that actually tried are now flattened by the slop code everyone is producing, they will quit soon.” (View Highlight)

- The DORA 2024 report, the most respected annual assessment of software delivery performance, backed by 39,000+ professionals surveyed over a decade, found that AI adoption was accompanied by an estimated decrease in delivery throughput of 1.5% and a reduction in delivery stability of 7.2%. (View Highlight)

- Just the truth, sitting there like a grenade with the pin already pulled. The replies were predictably split between people saying “finally someone said it” and people who felt personally attacked. You might think of it as a diatribe, but every single one of these claims is backed by peer-reviewed research, large-scale industry data, or both. I checked, so let me walk you through them. (View Highlight)

- “Your org rarely has good ideas. Ideas being expensive to implement was actually helping.”

This is the one that made people the angriest. It’s also the one with the most research behind it.

(View Highlight)

- The Harvard Business Review summarised it plainly: “Individuals, teams, and organizations alike benefit from a healthy dose of constraints. It is only when the constraints become too high that they stifle creativity and innovation.”

When building a feature took two weeks of engineering time, you had to be damn sure the feature was worth building. That friction wasn’t a bug, it was the filter. Mehta and Zhu showed in a 2016 Journal of Consumer Research paper (cited 157 times since) that even thinking about having scarce resources (not actually having them, just the mere thought of it) enhanced creativity by reducing cognitive fixation. (View Highlight)

- And if you’re an engineer reading this right now, you’d nod in agreement with me. You miss the friggin’ struggle, my dude. We all do.

Jessica Abel coined the term “idea debt” to describe this exact failure mode:

Spending more time imagining how cool something will be than actually doing the hard work of making it good. (View Highlight)

- When implementation is free, idea debt explodes. Everything gets half-built. Nothing gets finished well. GitClear’s 2025 analysis of 211 million changed lines of code (from Google, Microsoft, and Meta repos) found that refactoring, the act of improving existing code rather than just writing new code (for our non-technical readers out there), dropped from 25% of changed lines in 2021 to less than 10% in 2024. (View Highlight)

- We’re building more. We’re building worse. Adam Wathan, creator of Tailwind CSS, inadvertently confirmed Dax’s point when he told Pragmatic Engineer: “My biggest problem now is coming up with enough worthwhile ideas to fully leverage the productivity boost.” The constraint has shifted from “can we build it?” to “should we build it?” And most organisations have no backbone for answering the second question. (View Highlight)

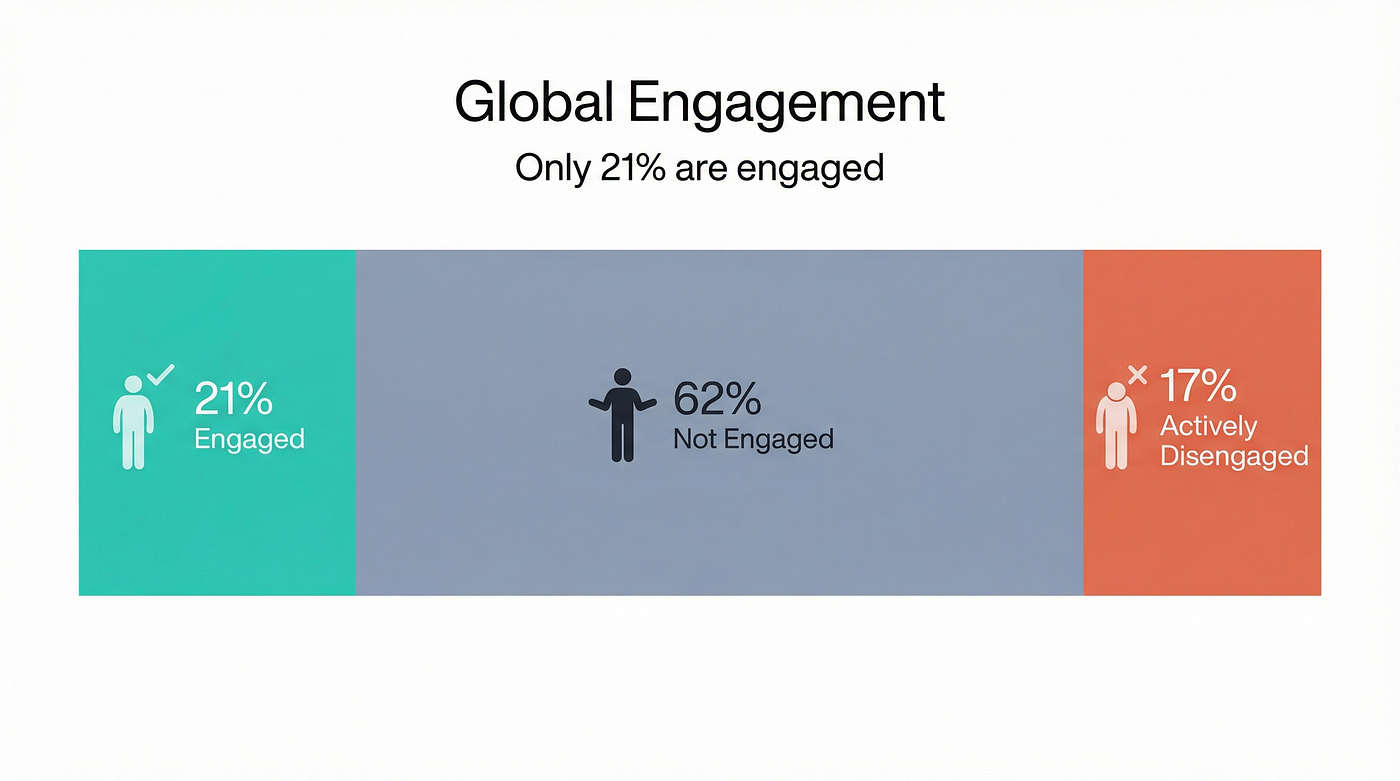

- “Majority of workers have no reason to be super motivated, they want to do their 9–5 and get back to their life.”

This is Gallup talking by the way.

(View Highlight)

- Gallup’s State of the Global Workplace 2025 report, their flagship annual survey of employee engagement worldwide, found that a mere 21% of employees globally are engaged at work.

62% are “not engaged” (the polite term for quiet quitting). 17% are “actively disengaged” (the polite term for working against you).

That means 79% of the global workforce is somewhere between “doing the minimum” and “actively sabotaging things.” This isn’t new. (View Highlight)

- It’s been roughly this bad for decades. The 2024 number actually dropped 2 percentage points from 2023, matching the decline seen during COVID. In the US specifically, engagement fell to 31% in 2024, a 10-year low. Gallup calls this period “The Great Detachment.” Now add AI tools to this picture. What does a disengaged worker do when you hand them a tool that makes it easier to produce output with less effort? They use it to produce the same output with less effort. (View Highlight)

- They don’t become 10x engineers. They become the same engineer running at half speed while looking twice as busy. The EY Work Reimagined Survey 2025 confirmed it: 88% of employees use AI at work, but only 5% use it in advanced ways. The other 83% are doing basic search and summarisation. That’s akin to using a Formula 1 car to drive to the grocery store. Herbert Simon won the Nobel Prize in Economics for describing exactly this behaviour. He called it “satisficing”, the natural human tendency to seek solutions that are good enough rather than optimal. When AI makes “good enough” easier to reach, most people stop there. The maths is straightforward: same quality, less effort, everyone goes home on time. (View Highlight)

- AI slowed him down by a median of 21%.

His quote belongs in a frame:

“You remember the jackpots. You don’t remember sitting there plugging tokens into the slot machine for two hours.”

Noy and Zhang published in Science in 2023 that ChatGPT “largely substitutes for worker effort rather than complementing workers’ skills.” High-ability workers maintained quality while getting faster. Yes, they pocketed the time savings. Low-ability workers saw quality improve because AI was doing their thinking for them. Neither group was maximising output. Both were satisficing. (View Highlight)

- 2025 Word of the Year: Slop

The word was originally 4chan slang that programmer Simon Willison championed into the mainstream in mid-2024. It means (View Highlight)

- Merriam-Webster noted the term’s tone was “less fearful, more mocking.” Which is appropriate, because the code quality data is genuinely mockable. CodeRabbit analysed 470 pull requests and found AI-assisted PRs contain 1.7x more issues than human-authored ones. At the 90th percentile, the gap widens to 2x. Correctness issues were 1.75x higher. Security issues 1.57x higher. The only category where AI performed better was spelling. (View Highlight)

- Your best people aren’t blind. They see the slop. They see their review queue tripling. They see junior devs shipping AI-generated code that takes longer to review than it would have taken to write from scratch. And they update their LinkedIn.

Kin Lane, a 35-year industry veteran, put it plainly: “I don’t think I have ever seen so much technical debt being created in such a short period of time during my 35-year career in technology.” (View Highlight)

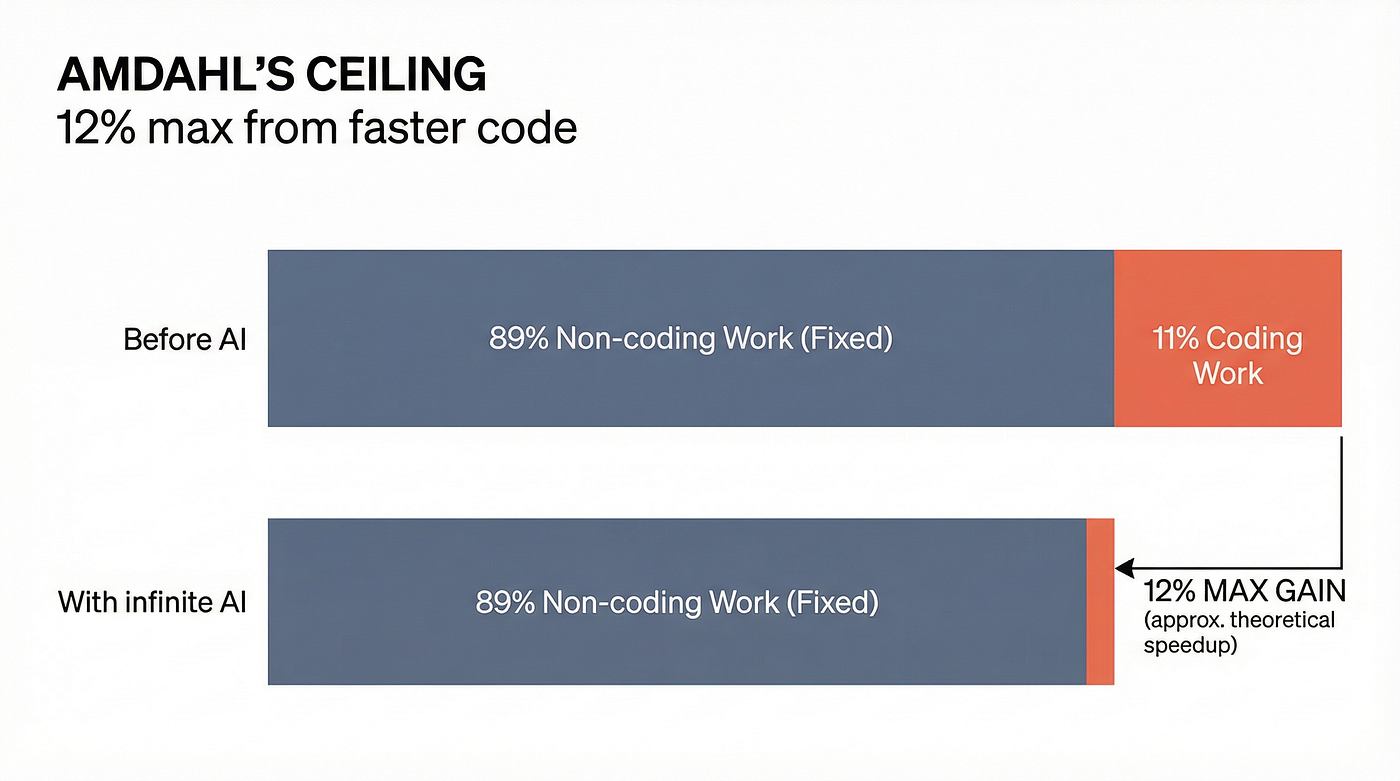

- “Even when you produce work faster you’re still bottlenecked by bureaucracy and the dozen other realities of shipping something real.”

This is Amdahl’s Law, and it’s been staring us in the face since 1967.

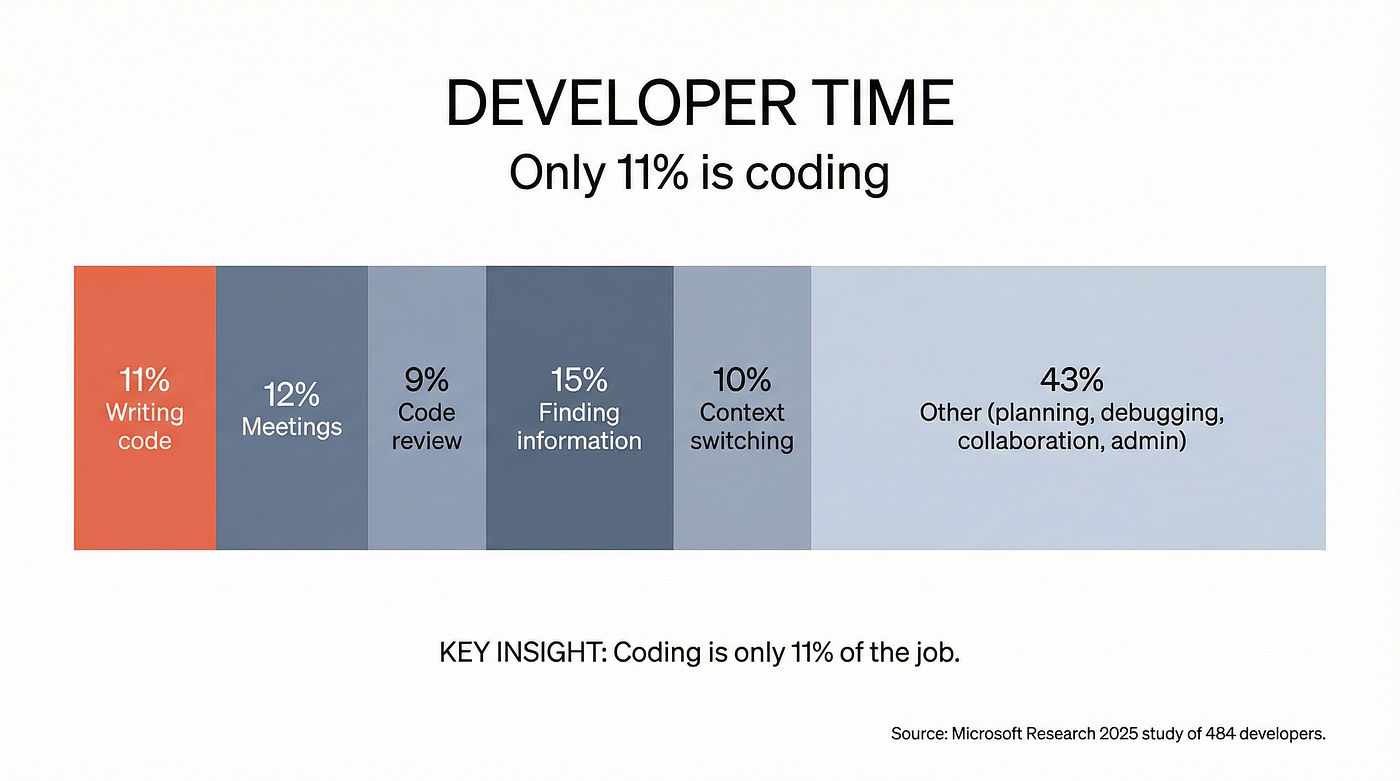

Amdahl’s Law states that the overall speedup from optimising one part of a system is limited by the fraction of time that part actually takes. If coding is only 11% of a developer’s work (which is what Microsoft Research found in their 2025 “Time Warp” study of 484 developers), then even making coding infinitely fast yields a maximum theoretical speedup of just 12%. (View Highlight)

- Twelve percent. That’s the ceiling. Not the floor, not the average. The ceiling that you hit if AI wrote code at the speed of light.

Fred Brooks told us this in 1975. “No Silver Bullet” told us this in 1986. Joel Spolsky wrote about it in 2000. The bottleneck has never been typing speed. (View Highlight)
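
The 12% ceiling falls straight out of Amdahl's Law: if a fraction p of the work is sped up by a factor s, the overall speedup is 1 / ((1 - p) + p / s), which approaches 1 / (1 - p) as s grows without bound. A minimal Python sketch using the 11% coding share quoted above; the function names and the sample factors (2x, 10x, 100x) are illustrative assumptions, not figures from the article.

```python
def amdahl_speedup(fraction: float, factor: float) -> float:
    """Overall speedup when `fraction` of total time is accelerated by `factor`."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    if factor <= 0.0:
        raise ValueError("factor must be positive")
    return 1.0 / ((1.0 - fraction) + fraction / factor)


def amdahl_ceiling(fraction: float) -> float:
    """Limit of the speedup as the accelerated part becomes infinitely fast."""
    if not 0.0 <= fraction < 1.0:
        raise ValueError("fraction must be in [0, 1)")
    return 1.0 / (1.0 - fraction)


CODING_SHARE = 0.11  # share of developer time spent coding, as quoted above

# Hypothetical speedup factors for the coding portion only.
for factor in (2, 10, 100):
    gain = amdahl_speedup(CODING_SHARE, factor) - 1.0
    print(f"coding {factor}x faster -> overall {gain:.1%} faster")

ceiling = amdahl_ceiling(CODING_SHARE) - 1.0
print(f"coding infinitely fast -> overall {ceiling:.1%} faster")  # 12.4%
```

Even a 10x faster coding step yields only about an 11% overall gain under these assumptions, because the other 89% of the work is untouched.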

- They were all screaming at us then and still continue to scream at us for not paying attention. The GitHub repo “typing-is-not-the-bottleneck” has been making the same point since 2009. Rob Bowley wrote a comprehensive historical analysis in January 2026 concluding that “coding has never been the governing bottleneck in software delivery. Not recently. Not in the last decade. And not across the entire history of the discipline.” (View Highlight)

- “Your CFO is like what do you mean each engineer now costs […] $39 per user per month. For a 100-developer team, that’s […] $20/month, maybe an AI-powered code review tool, an AI testing tool, API calls to Claude or GPT for ad hoc tasks. Stack Microsoft 365 Copilot at […] $2,000 per engineer per month isn’t a stretch. It’s arguably conservative. (View Highlight)

- Dax’s punchline lands because CFOs are already asking exactly this question. The Emburse/Talker Research survey of 1,500 finance and IT leaders found that 58% say AI purchases are easier to approve than any other software category, and 62% admitted to linking at least one software purchase to an AI initiative specifically to get budget approval. AI has become, in their words, “the budgeting loophole of 2025.” But the returns? BCG’s 2025 Center for CFO Excellence survey found a median AI ROI of just 10%, with nearly one-third of finance leaders seeing limited or no gain. Gartner describes 2026 as a “trough of disillusionment” year for AI, where the focus shifts from enthusiasm to measurable returns. (View Highlight)

- MIT Media Lab reported that 95% of organisations see no measurable return on their AI investment. Gartner predicted 30% of GenAI projects would be abandoned by end of 2025. The actual abandonment rate hit 42%. IDC predicts that by 2028, 100% of Global 100 companies will spend at least $2 million per year on AI governance software alone. Not on the AI tools themselves. On the software to manage the AI tools. Hilarious. Your CFO is not laughing at this tweet. Your CFO is forwarding it to the CTO. (View Highlight)

- Every bullet point in his tweet maps to a body (View Highlight)

- The code quality decline is documented across 211 million lines of code. The bureaucracy bottleneck has been described in the software engineering literature since 1975. And the CFO concern is showing up in every enterprise survey from BCG to Gartner to Emburse. But what Dax is pointing at isn’t that AI is useless. It’s that AI is a tool, and tools amplify whatever system they’re dropped into. (View Highlight)

- Drop a chainsaw into a well-organised logging operation and you get efficiency.

Drop it into a room full of people who were already struggling to coordinate, who are mostly disengaged, who weren’t coding well in the first place, and who work in an organisation that can’t ship because of twelve layers of approval, and you get exactly what Dax described.

(View Highlight)
